I spent two weeks building an AI tool for ADHD cognitive accessibility, and it’s changed how I work. But the story starts in 1998.

When I was twelve, my dad brought home a copy of Dragon NaturallySpeaking. The box promised you could talk to your computer and it would type what you said. In practice, you spent twenty minutes “training” the software by reading passages into a headset microphone, and then it would transcribe about 60% of your words correctly, replacing the rest with what felt like random selections from a phone book. I loved it. Not because it worked, but because the idea worked: the computer should adapt to you, not the other way around.

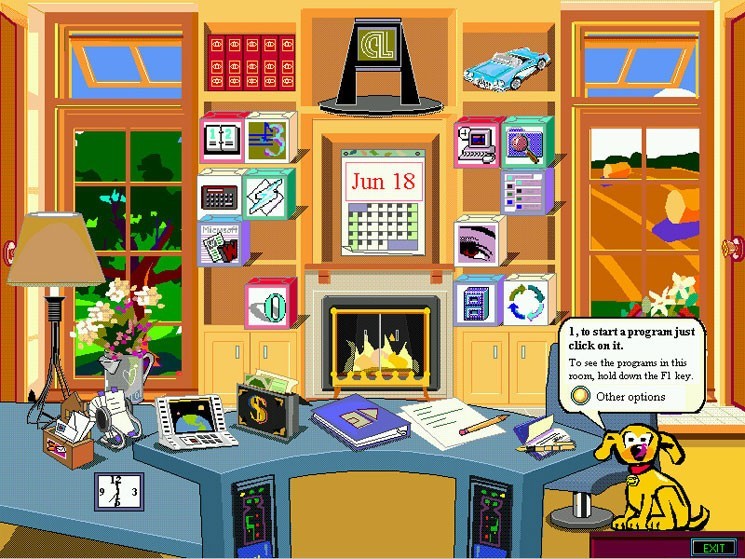

I think about Dragon a lot these days. Also Microsoft Bob, which tried to make your entire desktop feel like a living room. Also Clippy, who everybody hated but who was trying to do something I’ve come to appreciate: watch how you used a tool and offer help based on what it thought you needed. Clippy’s problem wasn’t the concept. In 1997, “artificial intelligence” meant a set of if/then rules wearing a cartoon paperclip costume. The technology couldn’t deliver on the promise.

Twenty-seven years later, it can. And I’ve been trying to figure out what to do with that.

The cognitive accessibility gap

Here’s something I noticed when I started poking at this. The accessibility tools I know best are the ones for people who can’t see screens, can’t hear audio, can’t use a mouse. WCAG, the web accessibility standard, has been around since 1999, and it’s made the internet meaningfully better for millions of people.

But there’s another kind of disability I hadn’t seen addressed the same way: the kind where your eyes work fine, your hands work fine, and your brain just processes the information differently than the interface expects. Turns out the W3C has a task force working on this, called COGA (for Cognitive and Learning Disabilities Accessibility), which I only found out about later. They’ve identified eight objectives, things like “help users focus,” “minimize cognitive load,” and “support adaptation.” If you read that list without context, you’d swear they were writing a product brief for a well-configured AI assistant. But COGA’s guidance is about static web design. Consistent layouts. Clear labels. Predictable navigation.

You can make a button bigger. You can simplify a form. What you can’t do with a static guideline is restructure how a paragraph delivers its information based on how a specific person’s brain actually processes it. That’s a different problem. And it’s the one I wake up inside every morning.

The expense report problem

I have inattentive-presenting ADHD. Got the diagnosis as an adult, which makes me boringly typical: over half of the roughly 15.5 million American adults with ADHD found out after they turned eighteen. Globally, the estimates run somewhere between 366 and 404 million adults, depending on which meta-analysis you trust. That’s more people than have bipolar disorder, PTSD, OCD, and panic disorder.

When I tell people I have ADHD, they tend to assume it means I can’t pay attention. For a long time I thought that too. But it’s more like saying a car with a bad transmission “can’t go fast.” The engine’s fine. The part that converts the engine’s output into motion is unreliable. Russell Barkley, the researcher who basically wrote the modern clinical understanding of adult ADHD, calls it a disorder of performance, not knowledge. I know what I should do. I almost always know how to do it. The executive function system that converts knowing into doing sometimes just doesn’t catch.

What that actually looks like

A couple of weeks ago, I spent six uninterrupted hours building a schema for a side project. Deep focus. Flow state. Lost track of time completely. That same day, I could not make myself open the tab to submit an expense report that would have taken ten minutes. The expense report was objectively more important (it was money owed to me). But my brain doesn’t run on importance. It runs on interest, urgency, novelty, and competition. Thomas Brown’s research on executive function activation figured this out years ago: the ADHD brain isn’t broken uniformly. It’s situationally variable. Important doesn’t activate it. Interesting does.

So this creates a specific, predictable set of problems with how I consume information. My working memory holds fewer items and drops them faster than it should. When someone sends me a message that ends with three questions, I’ve forgotten the first one by the time I finish reading the third. When someone presents me with five options and no recommendation, I freeze, because evaluating and choosing between options is itself an executive function task, and that’s the exact system that misfires.

When I started using AI assistants, the responses tended to default to a shape that didn’t work for me. Thorough. Well-organized. Balanced. Five paragraphs of context before the recommendation. Three options, each with pros and cons, no indication of which one the AI would actually pick. For my wiring, that’s not helpful information. That’s a wall of gray noise shaped like helpful information.

So I taught the computer how my brain works

Remember Dragon NaturallySpeaking? You trained it by reading to it. Same idea, different century.

Claude, the AI I use most, has a feature called skills: persistent instruction sets that change how the AI behaves across every conversation. Not a prompt you retype each time, but a background configuration that activates automatically based on context. I spent about two weeks building what I started calling an ADHD operating system. Not an app. Not a chatbot personality. About 10,000 words of behavioral modification across seven files, grounded in the clinical literature on how ADHD cognition actually works, telling the AI how to restructure its output for a brain like mine.

It runs in the background of everything I do now. Here’s what actually changed.

How the AI talks to me now

The AI gives me the answer first. Always. Conclusion, recommendation, action item, then context and rationale afterward. This sounds like a minor formatting preference until you understand why it matters. If my executive function gives me an unpredictable window of attention, the most critical information needs to land while the window is open. That’s sentence one, not paragraph four. I didn’t invent this idea; Barkley’s research on performance variability reads like a design spec for it. I just hadn’t seen anyone translate it into instructions an LLM could follow.

The AI asks me one question at a time. Before I built the skill, Claude would routinely end a message with two or three questions. Reasonable! Efficient! Except that answering question three requires holding questions one and two in working memory while I formulate a response, and working memory is the exact cognitive system that doesn’t work right. Now the AI either asks one thing or gives me tappable options. Sounds small. Changed everything.

The AI picks for me and tells me why. When there are multiple approaches to a problem, it recommends one, explains the reasoning, and notes what you’d trade off with the alternatives. “Here are three options” with no recommendation is a recipe for what clinicians call decision paralysis and what I call “staring at my screen for twenty minutes then closing the laptop.” The AI does the prioritizing. I can disagree with it, but I don’t have to generate the ranking from nothing, which is the part my brain skips.
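To make those three rules concrete, here is a minimal Python sketch. It is not the skill itself, which is written as plain-language instructions rather than code. The `Reply` class, its field names, and the `count_questions` helper are hypothetical, invented only to show the shape: answer first, reasoning after, at most one question.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Reply:
    """Hypothetical answer-first reply shape (illustration only, not a real API)."""
    answer: str                                          # recommendation or action item, always first
    rationale: str = ""                                  # context and reasoning, after the answer
    tradeoffs: List[str] = field(default_factory=list)   # what the alternatives would cost
    question: Optional[str] = None                       # a single follow-up question, or none

    def render(self) -> str:
        """Build the reply text with the answer on line one."""
        parts = [self.answer]
        if self.rationale:
            parts.append(f"Why: {self.rationale}")
        for tradeoff in self.tradeoffs:
            parts.append(f"Trade-off: {tradeoff}")
        if self.question:
            parts.append(self.question)
        return "\n".join(parts)


def count_questions(text: str) -> int:
    """Crude check: count question marks as a stand-in for questions asked."""
    return text.count("?")


reply = Reply(
    answer="Use SQLite.",
    rationale="It needs no server.",
    tradeoffs=["Postgres scales further."],
    question="Want the schema next?",
)
print(reply.render())
```

Because `question` holds a single optional string, the one-question rule is enforced by the structure rather than by willpower, which is the same design idea as the skill.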

The deeper changes

The AI never tells me to try harder. This is the design philosophy underneath the whole thing. When I say “I can’t make myself do X,” the skill reframes: what environmental change, tool, or external structure would make X happen without relying on motivation? I did not come up with this framing; it’s straight out of Barkley’s work. Telling someone with ADHD to “just do it” is like telling someone with glasses to “just see better.” The treatment isn’t effort. It’s a different lens. The skill makes the AI think in terms of lenses.

The AI tells me how long things take. ADHD comes with a symptom called time blindness, which means I cannot reliably estimate duration. Not “I’m bad at it.” I structurally cannot do it. Ten minutes and two hours feel roughly the same in prospect. So my skill requires time estimates on any multi-step plan, padded by 50%. Cognitive prosthesis, in the most literal sense: the tool provides a function my neurology does not.
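The padding rule is simple arithmetic, and a short Python sketch shows it. The function names and the example steps are hypothetical; the only number taken from the skill is the 50% padding.

```python
def padded_minutes(estimate: float, padding: float = 0.5) -> float:
    """Return the estimate increased by the padding fraction (0.5 means +50%)."""
    return estimate * (1 + padding)


def plan_with_estimates(steps):
    """Turn (step name, raw minutes) pairs into lines with padded estimates and a total."""
    lines = []
    total = 0.0
    for name, minutes in steps:
        padded = padded_minutes(minutes)
        total += padded
        lines.append(f"{name}: ~{padded:g} min")
    lines.append(f"Total: ~{total:g} min")
    return lines


# Hypothetical example: the ten-minute expense report, split into two steps.
for line in plan_with_estimates([("Open the tab", 2), ("Fill in the form", 8)]):
    print(line)
```

With these inputs the raw ten minutes becomes a fifteen-minute plan, which is closer to what the task will actually cost.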

The AI expects me to abandon systems. This is the one that surprised me most. Every productivity tool, every habit, every organizational scheme I’ve tried has had a shelf life. The novelty wears off, the dopamine dries up, the system stops working. Most tools treat this as user failure. My skill treats it as expected behavior. When I report that I’ve stopped using something, the AI helps me figure out what was working, what to keep, and how to iterate without the guilt spiral. Because for me, one of the most exhausting things about ADHD isn’t that systems fail. It’s that I feel like I failed every time one does.

Why ADHD cognitive accessibility needs more than a prompt

I can hear the objection from here. “That’s just a system prompt with extra steps.” And sure, you could type “respond concisely, lead with action items, give one recommendation” and get a vaguely similar effect for one conversation.

But a prompt doesn’t know that code comments should explain why, not what, because the ADHD eye skims code and rebuilds context from comments after attention drifts. A prompt doesn’t know that career conversations need to account for rejection sensitivity as a neurological symptom. A prompt doesn’t know that when recommending a daily routine, medication timing and energy curves matter, and that rigid systems need built-in flexibility because I’ll remodel them in three weeks anyway.

It turns out I wasn’t the only person circling this idea. When I went looking for research after I’d already built the thing, I found a 2024 study, “Exploring Large Language Models Through a Neurodivergent Lens,” that looked at how neurodivergent users were already sharing custom prompts in online communities, jury-rigging AI to work with their brains. One ADHD user asked for help with “organization, planning, and prioritizing tasks,” which is literally just a list of executive function deficits with a hopeful tone. The researchers recommended that LLMs ship with built-in neurodivergent-friendly prompt templates.

I think they’re pointing at the right problem, though I’m not sure templates go far enough. What I’ve been finding is that my brain needs something more like a persistent behavioral layer that understands why the formatting matters and adapts across contexts. Think of it as the difference between a ramp and a guide dog. Both are accessibility tools. One of them thinks.

The research is catching up to the users

A team at KTH Royal Institute of Technology tested ChatGPT as assistive technology for ADHD reading comprehension and found something that made me laugh: comprehension actually decreased for first-time users, but improved for people who already knew how to work with the tool. The punchline writes itself. Of course it got worse before it got better. That’s how every ADHD accommodation I’ve ever tried has worked. The learning curve is the tax you pay before the tool starts earning its keep.

At HCII 2025, researchers tested LLM-based conversational characters designed specifically for adult ADHD and found measurable improvements in attention and emotional regulation when the AI’s language style was tuned for the population. Not the content. The style. How information was delivered changed how the brain processed it. I’d been doing this intuitively for months before I read the paper, and seeing it in a controlled study was the kind of vindication you don’t get very often when you’re building things on instinct and going looking for the science later.

The Safren model of CBT for adult ADHD (the most validated behavioral intervention for the condition, with a 56% response rate when added to medication versus 13% on meds alone) has four modules: organizing and planning, reducing distractibility, adaptive thinking, and procrastination management. My skill implements rough analogs of all four, which I didn’t realize until I read the paper. Not as therapy. I want to be careful about that distinction. As environment design. The AI doesn’t treat my ADHD. It just restructures the information environment so that my existing medication and coping strategies work better.

Thirty years of almost

Here’s what I keep coming back to. For thirty years, the accessibility tools I’ve known about have mostly worked by making the container follow a standard. A screen reader serves every blind user in roughly the same way, and that works because the accommodation is consistent.

Cognitive accessibility seems to resist that approach. My ADHD is different from your ADHD. The ADHD brain that needs absolute brevity is different from the one that needs extensive context to feel safe making a decision. Autism, dyslexia, traumatic brain injury, age-related cognitive decline: each one creates a different information processing pattern. Static guidelines can set a floor. They can’t personalize.

LLMs can. The technology is adaptive in a way that web design is not. The COGA objectives read less like a feature list for LLM developers to implement and more like a description of what LLMs already do when someone takes the time to explain how their brain works.

The part I’m still thinking about is what to do with that. The defaults are optimized for a generic neurotypical user. Something like 15 to 20% of the global population is neurodivergent, which is a lot of brains being served a generic configuration. I built mine in about two weeks because I’m a technical person with fifteen years of IT experience, so the tooling was familiar. But I don’t think anyone should have to write 10,000 words of behavioral modification instructions grounded in clinical research just to get an AI to communicate with them. The technology exists for AI systems to offer cognitive accessibility profiles the way your phone offers font size settings. I haven’t found anyone building that as a real feature yet. Maybe they will. Maybe someone reading this will.

The twelve-year-old was right

Dragon NaturallySpeaking was terrible software that contained a correct idea. Clippy was a bad execution of a good insight. Microsoft Bob was an ambitious, weird failure at making the computer feel like it knew you. Every one of those products was limited by the technology of its time. If/then rules can’t understand a human brain. LLMs can get closer than anything I’ve seen.

I keep a copy of my ADHD skill on GitHub. If you have ADHD, or autism, or dyslexia, or any cognitive difference at all, I’d encourage you to try building your own. Not because it’s easy (the tools aren’t there yet for most people), but because the act of telling an AI how your brain works, and watching it actually listen, is the closest I’ve come to that feeling I had at twelve years old, standing in my dad’s office, reading the back of a software box that promised the computer would learn to understand me.

It took twenty-seven years. The computer can finally get there, if you’re willing to tell it what you need.